Multiple processor scheduling, or multiprocessor scheduling, focuses on designing the scheduling function for a system that consists of more than one processor. With multiple processors in the system, load sharing becomes feasible, but scheduling becomes more complex.

Just as no single policy or rule can be declared the best scheduling solution for a system with a single processor, there is no best scheduling solution for a system with multiple processors either.

In this section, we will discuss the multiprocessor system along with the key points that must be considered while scheduling a system with multiple processors.

What is a Multiprocessor?

A multiprocessor is a system with several processors. The presence of multiple processors makes it more complex to design a scheduling algorithm. Multiprocessor systems can be categorized into:

1. Loosely Coupled or Distributed Multiprocessor

Multiple processors in the system are independent of each other. Each processor in the system has its own memory and I/O channels.

2. Functionally Specialized Processor

In this system, among the collection of multiple processors, there is a master processor which is a general-purpose processor. This master processor controls the other specialized processors in the system and provides services to them.

3. Tightly Coupled Multiprocessors

In this system, the processors are under the integrated control of the operating system. All the processors in this system share a common memory. These processors are sometimes also termed homogeneous, as they are identical in terms of their functionality.

Key Points of Multiple Processor Scheduling

Note: The multiprocessing system we are considering has identical, or homogeneous, processors in terms of functionality.

Techniques of Multiprocessor Scheduling

Multiprocessor scheduling can be done in two ways: asymmetric multiprocessor scheduling and symmetric multiprocessor scheduling.

In asymmetric multiprocessor scheduling, one processor is designated as the master processor, which handles all scheduling decisions along with I/O processing and other system activities. Only the master processor runs the operating system’s code; the other (slave) processors execute only user code.
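The asymmetric arrangement can be illustrated with a small sketch. It is only a toy model: the function name `master_dispatch` and the round-robin hand-out are assumptions made for illustration, not something the scheme prescribes. The point it shows is that a single place makes every scheduling decision, so the other processors never touch the scheduler's data.

```python
from collections import deque

def master_dispatch(ready, num_workers):
    """Hypothetical master-side scheduler: hand out processes round-robin.

    Only this function (standing in for the master processor) reads the
    ready queue, so the worker processors need no shared scheduler state.
    """
    assignments = {w: [] for w in range(num_workers)}
    queue = deque(ready)
    worker = 0
    while queue:
        assignments[worker].append(queue.popleft())
        worker = (worker + 1) % num_workers
    return assignments

plan = master_dispatch(["P1", "P2", "P3", "P4", "P5"], num_workers=2)
print(plan)  # {0: ['P1', 'P3', 'P5'], 1: ['P2', 'P4']}
```

Because one processor decides everything, the design is simple, but the master can become a bottleneck as the number of processors grows.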

In symmetric multiprocessor scheduling, all the processors in the system are self-scheduling. Either each processor has its own list of processes to execute, or there is a common list of processes from which the processors pick the next process to execute.
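The two ways of organizing the ready list can be sketched as follows. The class names `CommonQueueScheduler` and `PerCpuQueueScheduler` are hypothetical, and the sketch is single-threaded; a real shared list would need locking so that two processors never pick the same process.

```python
from collections import deque

class CommonQueueScheduler:
    """All processors pull from one shared ready list."""
    def __init__(self, processes):
        self.ready = deque(processes)

    def next_for(self, cpu):
        # Any processor takes the head of the shared list.
        # (A real kernel would protect this with a lock.)
        return self.ready.popleft() if self.ready else None

class PerCpuQueueScheduler:
    """Each processor owns a private ready list."""
    def __init__(self, num_cpus):
        self.ready = [deque() for _ in range(num_cpus)]

    def add(self, cpu, process):
        self.ready[cpu].append(process)

    def next_for(self, cpu):
        # A processor looks only at its own list.
        queue = self.ready[cpu]
        return queue.popleft() if queue else None

shared = CommonQueueScheduler(["P1", "P2", "P3"])
first = shared.next_for(0)        # "P1"
second = shared.next_for(1)       # "P2"

private = PerCpuQueueScheduler(num_cpus=2)
private.add(0, "P1")
private.add(0, "P2")
idle_pick = private.next_for(1)   # None: CPU 1 has nothing queued
busy_pick = private.next_for(0)   # "P1"
```

In the per-processor variant, CPU 1 stays idle even though work is waiting on CPU 0, which is the situation that makes some form of load balancing necessary.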

Processor Affinity

Processor affinity means that a process has developed an affinity for the processor on which it is currently running. Let us see why this happens.

Consider a multiple processor system in which a process P1 is running on, say, processor PR1. While P1 is running, the data it accesses gets cached in PR1’s cache memory C1. As a result, most of the memory accesses made by P1 are satisfied by the cache memory C1 of processor PR1.

Now, what if the process P1 is migrated from processor PR1 to processor PR2?

- First, the contents that P1 had built up in cache memory C1 of processor PR1 are no longer of use to it and must be invalidated.

- Next, the cache memory C2 of processor PR2 must be repopulated with the data frequently accessed by P1 so that most of its memory access requests are again satisfied from the cache.

Because invalidating and repopulating caches is costly, most symmetric multiprocessing systems try to avoid migrating a process from one processor to another, and this is referred to as processor affinity. Processor affinity can be defined as the tendency of a process to stay on the processor on which it is currently running.
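The cost of migration can be made concrete with a toy model. The sketch below assumes an idealized cache of unlimited size, represented as a Python set, and a process that keeps touching the same four addresses; the helper name `run` and the address values are illustrative only.

```python
def run(accesses, cache):
    """Replay memory accesses against a cache (a set of addresses).

    Returns the number of misses; each missed address is then loaded.
    """
    misses = 0
    for addr in accesses:
        if addr not in cache:
            misses += 1
            cache.add(addr)
    return misses

working_set = [0, 1, 2, 3] * 3     # P1 touches the same 4 addresses repeatedly
c1, c2 = set(), set()              # caches C1 (PR1) and C2 (PR2), initially empty

cold_pr1 = run(working_set, c1)    # first run on PR1: cache is cold
warm_pr1 = run(working_set, c1)    # P1 stays on PR1: cache is warm
migrated = run(working_set, c2)    # P1 moves to PR2: C2 must be repopulated

print(cold_pr1, warm_pr1, migrated)  # 4 0 4
```

Staying on PR1 costs no further misses, while the move to PR2 pays the full warm-up cost again. That difference is what processor affinity tries to preserve.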

Processor affinity can be further classified into two types: soft affinity and hard affinity. When the system has a policy of trying not to migrate a process from one processor to another but does not guarantee it, i.e. the process may still be migrated if conditions require it, this is called soft affinity.

When the system ensures that a process is not migrated from one processor to another, it is termed hard affinity. Operating systems such as Linux provide system calls that let a process specify that it should not migrate from one processor to another.
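On Linux, the relevant system call is `sched_setaffinity()`, which Python exposes as `os.sched_setaffinity`. The sketch below pins the calling process to a single CPU and then restores the original mask. The helper name `pin_to_one_cpu` is hypothetical, and the functions are not available on every platform, so the code checks for them first.

```python
import os

def pin_to_one_cpu():
    """Pin the calling process to one CPU, then restore the old mask.

    Returns the set of CPUs the process was restricted to while pinned,
    or None on platforms without os.sched_setaffinity.
    """
    if not hasattr(os, "sched_setaffinity"):
        return None
    allowed = os.sched_getaffinity(0)        # pid 0 means "this process"
    target = min(allowed)                    # pick any CPU we may run on
    os.sched_setaffinity(0, {target})        # hard affinity: only this CPU
    pinned = os.sched_getaffinity(0)
    os.sched_setaffinity(0, allowed)         # restore the original mask
    return pinned

pinned = pin_to_one_cpu()
print(pinned)
```

While the mask contains a single CPU, the scheduler will not migrate the process elsewhere.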

Load Balancing

On a symmetric multiple processor system, the workload must be spread evenly across the processors to get the full benefit of having multiple processors. If the workload is not balanced properly, some processors may sit idle while others carry a high workload, with processes waiting for the CPU.

Load balancing is typically necessary only on systems where every processor has its own private list of processes to execute.

If the system has a common list containing the processes to execute, there is no need for load balancing, because whenever a processor becomes idle it simply takes the next process from the common list.

Load balancing can be achieved in two ways: push migration and pull migration.

Push Migration: Here a dedicated task periodically checks the load on every processor. If it finds an imbalance, it moves processes from an overloaded processor to an idle or less busy processor. This pushing of processes from an overloaded processor to a less busy one is termed push migration.

Pull Migration: Here an idle processor itself pulls a waiting process from an overloaded or busy processor and starts executing it to balance the load.

Push and pull migration can be implemented in parallel. For example, Linux runs its push migration algorithm every 200 milliseconds, and whenever a processor is found idle it runs its pull migration algorithm to pull processes from overloaded processors.
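Both forms of migration can be sketched over per-processor ready lists. This is a simplified model, not how any particular kernel does it: the function names are hypothetical, load is measured simply as queue length, and the process taken is whichever sits at the tail of the busiest queue.

```python
def push_migration(queues):
    """Periodic balancer: move work from the busiest queue to the least
    busy one until their lengths differ by at most one.

    Returns the number of processes moved.
    """
    moved = 0
    while True:
        busiest = max(queues, key=len)
        idlest = min(queues, key=len)
        if len(busiest) - len(idlest) <= 1:
            return moved
        idlest.append(busiest.pop())
        moved += 1

def pull_migration(queues, idle_cpu):
    """Run by an idle processor: take one waiting process from the
    busiest queue. Returns the process pulled, or None if none exists.
    """
    busiest = max(queues, key=len)
    if busiest is queues[idle_cpu] or not busiest:
        return None
    process = busiest.pop()
    queues[idle_cpu].append(process)
    return process

queues = [["P1", "P2", "P3", "P4"], [], ["P5"]]
pulled = pull_migration(queues, idle_cpu=1)   # CPU 1 is idle and pulls "P4"
moved = push_migration(queues)                # the periodic pass moves 1 more
print(pulled, moved, [len(q) for q in queues])  # P4 1 [2, 2, 1]
```

Pull migration reacts immediately when a processor runs out of work, while the periodic push pass evens out whatever imbalance remains.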

Observed carefully, load balancing counteracts the benefits of processor affinity, because pushing or pulling a process from one processor to another invalidates the contents of its cache, as we saw under processor affinity.

So there is no perfect strategy or rule for deciding the best policy for scheduling a system with multiple processors.

Symmetric Multithreading

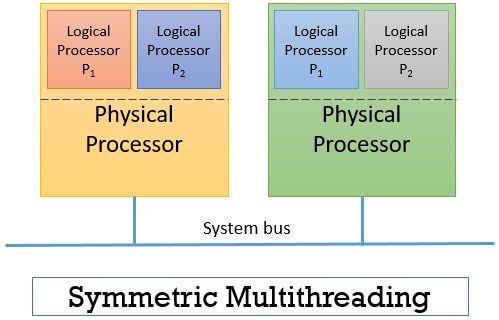

A symmetric multiple processor system has multiple physical processors, which allow several threads to execute concurrently. The idea now is to provide multiple logical processors rather than additional physical processors. This concept of providing logical processors to threads is termed symmetric multithreading (more widely known as simultaneous multithreading, or SMT); on Intel processors it is called hyper-threading technology.

Symmetric multithreading allows several logical processors to be created on a single physical processor. The operating system sees several logical processors, each with its own architecture state: each logical processor has its own general-purpose and machine-state registers. Each logical processor is also capable of handling interrupts, and shares the resources of its physical processor.

The figure above shows two physical processors, each providing two logical processors. From the operating system’s view, there are four processors to schedule.

Symmetric multithreading is a hardware feature rather than a software one. The hardware is designed to provide an architecture state for each logical processor, along with interrupt handling. The operating system need not be designed differently to run on such a system, but if it is designed with symmetric multithreading in mind, it can obtain a performance gain.

Consider a symmetric multiprocessing system that implements symmetric multithreading, with two physical processors that each have two logical processors, as in the figure above.

Suppose both physical processors are idle. If the operating system is not aware of symmetric multithreading, it might schedule two separate threads on the two logical processors of the same physical processor, leaving the other physical processor idle.

Instead, it should schedule the two threads on logical processors of different physical processors.

To conclude, although performance can be gained through careful scheduling on a multiprocessing system, there is still no single best solution for multiprocessor scheduling.
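The difference between the two placements can be sketched by changing the order in which logical processors are considered. The function names and the `(physical, logical)` tuple representation are assumptions made for illustration.

```python
def naive_place(num_threads, physical, logical_per_physical):
    """Unaware ordering: logical processors are listed one physical
    processor at a time, so early threads share a physical processor."""
    slots = [(core, lcpu)
             for core in range(physical)
             for lcpu in range(logical_per_physical)]
    return slots[:num_threads]

def aware_place(num_threads, physical, logical_per_physical):
    """Aware ordering: use one logical processor on every physical
    processor before doubling up on any of them."""
    slots = [(core, lcpu)
             for lcpu in range(logical_per_physical)
             for core in range(physical)]
    return slots[:num_threads]

naive = naive_place(2, physical=2, logical_per_physical=2)
aware = aware_place(2, physical=2, logical_per_physical=2)
print(naive)  # [(0, 0), (0, 1)]  both threads on physical processor 0
print(aware)  # [(0, 0), (1, 0)]  one thread per physical processor
```

With the aware ordering, the two threads do not compete for the shared resources of a single physical processor.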
