Swapping is one of several methods of memory management. In swapping, an idle or blocked process in main memory is swapped out to the backing store (disk), and a process on the disk that is ready for execution is swapped into main memory to run. We will discuss swapping in more detail below.

As we know, a process must be in main memory to execute. However, main memory is limited, and the memory needed by all the processes in the system is often more than the main memory available.

Consider a real-world example:

In the Windows operating system, 50-100 processes start executing as soon as the system boots. These processes do nothing but check for application updates, and such processes can take up to 5-10 MB of memory. Other processes start checking for incoming network traffic, incoming mail and other things.

All of this happens before the user has started any process. A single user application nowadays takes about 500 MB of memory just to start up. Keeping all these processes in main memory would therefore require a large amount of main memory, and increasing the size of main memory would increase the cost of the system. To deal with this memory overload, two approaches have been developed: swapping and virtual memory.

For now, we will discuss only swapping in this section.

Swapping

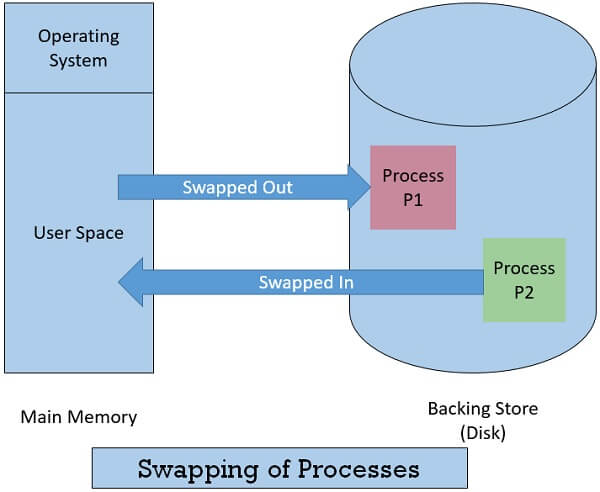

A process must be in main memory before it starts execution, so a process that is ready for execution is brought into main memory. Now suppose a running process gets blocked. The memory manager temporarily swaps that blocked process out to the disk, which frees space in main memory for another process.

The memory manager then swaps the process that is ready for execution from the disk into main memory. The swapped-out process is also brought back into main memory when it becomes ready for execution again.

Ideally, the memory manager swaps processes fast enough that main memory always has processes ready for execution.
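The swap-out/swap-in cycle described above can be sketched as a toy model. This is a minimal sketch, assuming main memory is just a capacity counter (no addresses) and that sizes are in arbitrary units; the class and method names (`Swapper`, `block`, `wake`, `swap_in_ready`) are hypothetical and not taken from any real operating system.

```python
from dataclasses import dataclass

@dataclass
class Process:
    pid: str
    size: int              # memory needed, in arbitrary units (assumption)
    state: str = "ready"   # "ready", "running" or "blocked"

class Swapper:
    """Toy model: main memory is only a capacity counter, not real addresses."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.in_memory = {}   # pid -> Process resident in main memory
        self.on_disk = {}     # pid -> Process held in the backing store

    def free(self):
        return self.capacity - sum(p.size for p in self.in_memory.values())

    def block(self, pid):
        """A resident process blocks: swap it out to the disk."""
        proc = self.in_memory.pop(pid)
        proc.state = "blocked"
        self.on_disk[pid] = proc

    def wake(self, pid):
        """A swapped-out process becomes ready again (it is still on disk)."""
        self.on_disk[pid].state = "ready"

    def swap_in_ready(self):
        """Bring ready processes from disk into memory while they fit."""
        for pid in list(self.on_disk):
            proc = self.on_disk[pid]
            if proc.state == "ready" and proc.size <= self.free():
                self.in_memory[pid] = self.on_disk.pop(pid)

s = Swapper(capacity=100)
s.in_memory["A"] = Process("A", 60, "running")  # A is loaded and running
s.on_disk["B"] = Process("B", 50)               # B is ready but on disk
s.swap_in_ready()   # B does not fit yet: only 40 units are free
s.block("A")        # A blocks and is swapped out, freeing 100 units
s.swap_in_ready()   # now B is swapped in
print(sorted(s.in_memory), sorted(s.on_disk))   # ['B'] ['A']
```

The blocked process A stays on disk until `wake` marks it ready and enough memory is free for it to be swapped back in.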

Swapping can also be driven by priority-based preemptive scheduling. Whenever a higher-priority process arrives, the memory manager swaps the lowest-priority process out to the disk and swaps the highest-priority process into main memory for execution. When the highest-priority process finishes, the lower-priority process is swapped back into memory and continues executing. This variant of swapping is termed roll-out, roll-in (or swap-out, swap-in).
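The roll-out, roll-in policy can be illustrated with a small sketch. It assumes memory holds at most a fixed number of processes (`slots`) and that a larger number means higher priority; both are simplifications for illustration, and `RollOutRollIn` is a hypothetical name.

```python
class RollOutRollIn:
    """Toy roll-out/roll-in: memory holds at most `slots` processes.
    A larger priority number means higher priority (assumption)."""

    def __init__(self, slots):
        self.slots = slots
        self.memory = {}   # pid -> priority, processes in main memory
        self.disk = {}     # pid -> priority, processes rolled out to disk

    def arrive(self, pid, priority):
        if len(self.memory) < self.slots:
            self.memory[pid] = priority
            return
        victim = min(self.memory, key=self.memory.get)
        if self.memory[victim] < priority:
            # Roll out the lowest-priority resident process...
            self.disk[victim] = self.memory.pop(victim)
            # ...and roll in the newly arrived higher-priority one.
            self.memory[pid] = priority
        else:
            self.disk[pid] = priority   # not urgent enough: wait on disk

    def finish(self, pid):
        del self.memory[pid]
        if self.disk:
            # Roll the highest-priority waiting process back in.
            best = max(self.disk, key=self.disk.get)
            self.memory[best] = self.disk.pop(best)

m = RollOutRollIn(slots=1)
m.arrive("low", 1)
m.arrive("high", 5)        # "low" is rolled out to make room
print(m.memory, m.disk)    # {'high': 5} {'low': 1}
m.finish("high")           # "low" is rolled back in
print(m.memory, m.disk)    # {'low': 1} {}
```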

This raises a question:

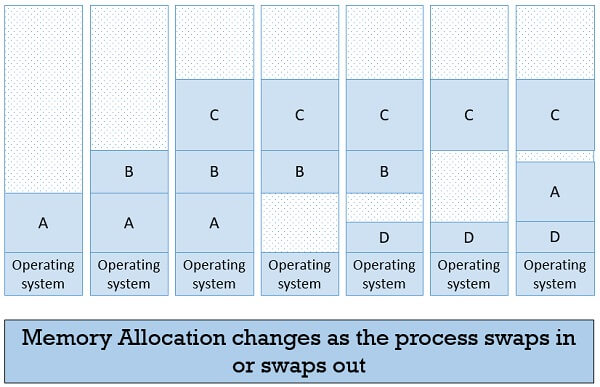

When a process is swapped out and is swapped in again, does it occupy the same memory space as it has occupied previously?

The answer depends on the address-binding technique. If address binding is done statically, i.e. at compile time or load time, it is difficult to relocate the process in memory when it is swapped in again to resume execution. If binding is done at execution time, the process can be swapped into a different location in memory, because physical addresses are computed during execution.
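Execution-time binding is commonly implemented with a base (relocation) register and a limit register; the text does not name a mechanism, so this scheme is an assumption here. In this minimal sketch, every logical address is translated by adding the current base, so swapping the process in at a new location only requires changing the base. `RelocationRegister` is a hypothetical name.

```python
class RelocationRegister:
    """Execution-time address binding with base and limit registers."""

    def __init__(self, base, limit):
        self.base = base    # where the process currently starts in physical memory
        self.limit = limit  # size of the process's logical address space

    def translate(self, logical):
        # A real MMU would trap to the operating system on a bad address.
        if not 0 <= logical < self.limit:
            raise ValueError(f"address {logical} outside process bounds")
        return self.base + logical

reg = RelocationRegister(base=1000, limit=500)
print(reg.translate(42))   # 1042
# The process is swapped out and later swapped back in at a new location:
reg.base = 3000
print(reg.translate(42))   # 3042: same logical address, new physical address
```

With static binding, the address 1042 would have been fixed at compile or load time, so the process would have to return to the same region.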

As the image shows, when process A is swapped back into main memory to resume its execution, it is allocated a new memory address. Here, address binding is done at execution time.

So far, we have talked about main memory in the context of swapping. Now let us look at what happens on the backing store (disk). The disk must have enough space to hold the swapped-out process images of all users. There are two ways to keep swapped-out process images on disk.

- The first alternative is to create a separate swap file for each swapped-out process. However, this increases the number of files and directory entries, and the added overhead increases the search time for any I/O operation.

- The second alternative is to keep a single common swap file on the disk and record the location of each swapped-out process image within that file. Here, the size of the swap file must be estimated up front, because it determines how many processes can be swapped out.
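The common-swap-file approach can be sketched as a fixed-size byte array plus a table recording where each process image lives. This is an illustration only: the first-fit placement policy and the `SwapFile` class are assumptions, not a description of any particular operating system.

```python
class SwapFile:
    """One common swap file whose size is fixed (estimated) up front.
    `table` records the location of each swapped-out process image."""

    def __init__(self, size):
        self.data = bytearray(size)
        self.table = {}   # pid -> (offset, length) of its image in the file

    def _find_space(self, length):
        """First-fit search for a free gap of `length` bytes."""
        pos = 0
        for off, ln in sorted(self.table.values()):
            if off - pos >= length:
                return pos
            pos = off + ln
        if len(self.data) - pos >= length:
            return pos
        return None

    def swap_out(self, pid, image: bytes):
        off = self._find_space(len(image))
        if off is None:
            raise RuntimeError("swap file full: it was sized too small")
        self.data[off:off + len(image)] = image
        self.table[pid] = (off, len(image))

    def swap_in(self, pid) -> bytes:
        off, ln = self.table.pop(pid)
        return bytes(self.data[off:off + ln])

sf = SwapFile(size=16)
sf.swap_out("A", b"AAAAAAAA")   # stored at offset 0
sf.swap_out("B", b"BBBB")       # stored at offset 8
print(sf.swap_in("A"))          # b'AAAAAAAA'
sf.swap_out("C", b"CCCCCC")     # reuses the gap A left at offset 0
print(sf.table)                 # {'B': (8, 4), 'C': (0, 6)}
```

Once the estimated size is used up, `swap_out` fails, which is why the size of the common swap file limits how many processes can be swapped out.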

Whichever method is used, the disk space reserved for swapping must be larger than the space needed for demand paging, since whole process images are swapped out rather than individual pages.

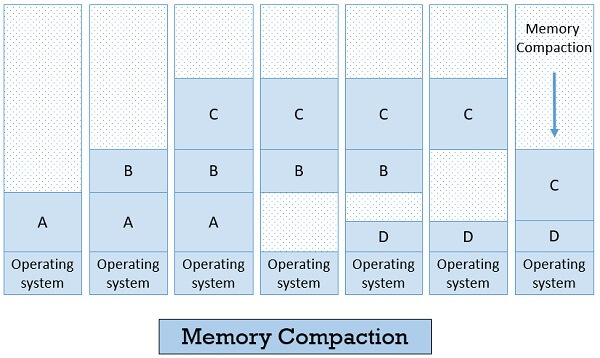

The term memory compaction comes up when studying swapping. As processes are swapped in and out, they leave multiple holes (free gaps) in memory. These holes can be combined into one large free block by moving all the processes downward as far as possible. However, this requires a lot of CPU time, so the technique is typically not used.
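Compaction can be sketched as sliding every process toward address 0 so that all holes merge into one free block at the top of memory. This minimal sketch assumes memory is described as a list of `(pid, start, size)` tuples; the number of units copied is reported as a rough stand-in for the CPU time the text warns about. The `compact` function is hypothetical.

```python
def compact(segments):
    """Slide every resident process down toward address 0.

    segments: list of (pid, start, size) tuples (hypothetical layout).
    Returns (new_layout, moved), where `moved` counts the units of memory
    that had to be copied, a rough proxy for the CPU cost of compaction."""
    new_layout, next_free, moved = [], 0, 0
    for pid, start, size in sorted(segments, key=lambda s: s[1]):
        if start != next_free:
            moved += size          # the whole process image must be copied
        new_layout.append((pid, next_free, size))
        next_free += size
    return new_layout, moved

# Memory of 100 units with holes at 10-20, 50-70 and 80-100:
layout, cost = compact([("A", 0, 10), ("B", 20, 30), ("C", 70, 10)])
print(layout)   # [('A', 0, 10), ('B', 10, 30), ('C', 40, 10)]
print(cost)     # 40, after which 50-100 is a single free block
```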

Moving further with swapping, let us discuss:

How much memory should be allocated to a process while it is created or swapped in the memory?

The answer depends on the following two points:

- Whether the process is created with a fixed size that can never change.

- Whether the process’s data segment can grow while it is running.

If the process is created with a fixed size, the problem is simple: the operating system allocates exactly the amount of memory the process needs, no more and no less.

If the process can grow while it runs, it is better to give it a little extra space when it is swapped in or out. This reduces the overhead of moving the process to a new location when it no longer fits in its allocated memory.
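The sizing rule above can be sketched in a few lines. The 25% `slack` is an arbitrary illustrative value, not a standard figure, and both function names are hypothetical.

```python
import math

def allocation_size(image_size, can_grow, slack=0.25):
    """Memory to reserve when a process is created or swapped in.
    `slack` (25% by default) is an arbitrary illustrative choice."""
    if not can_grow:
        return image_size                               # fixed size: exact fit
    return image_size + math.ceil(image_size * slack)   # room to grow in place

def needs_relocation(current_size, growth, allocated):
    """True if the process has outgrown its region and must be moved
    (or swapped out and back in somewhere larger)."""
    return current_size + growth > allocated

print(allocation_size(100, can_grow=False))   # 100
print(allocation_size(100, can_grow=True))    # 125
print(needs_relocation(100, 20, 125))         # False: growth fits in the slack
print(needs_relocation(100, 30, 125))         # True: the process must move
```

A larger slack makes relocation rarer but leaves more memory idle, so the value is a trade-off rather than a fixed rule.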

So, after learning about swapping, we can say that swapping occurs when the amount of free main memory reaches a critically low point: processes in main memory are then temporarily swapped out to the disk, and a swapped-out process is swapped back into memory when it becomes ready for execution again.
