Cache coherence ensures data consistency across every copy of a memory block in the system, i.e. in the local cache of each processor and in the main memory shared by the processors. It guarantees that each copy of a data block held in the processors' caches has a consistent value.

In this section, we will discuss the cache coherence problem and the protocols for resolving it.


What is the Cache Coherence Problem?

In a multiprocessor environment, all the processors in the system share the main memory via a bus. A single common cache for all the processors would have to be large, and a larger cache is slower to access, which would reduce the performance of the system.

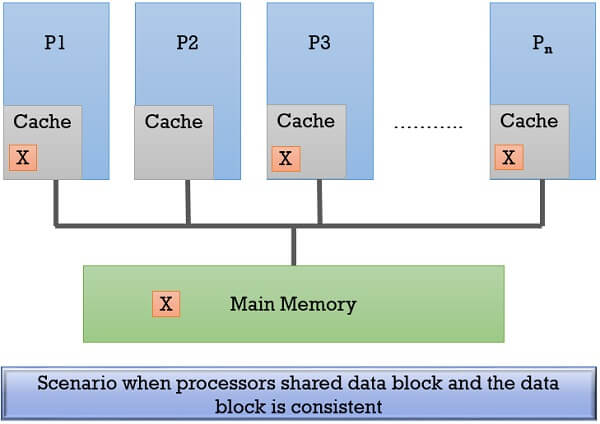

For better performance, each processor implements its own cache. Processors may share the same data block by each keeping a copy of it in their own cache. The figure below shows how processors P1, P3 and Pn each hold a copy of shared data block X of main memory in their caches.

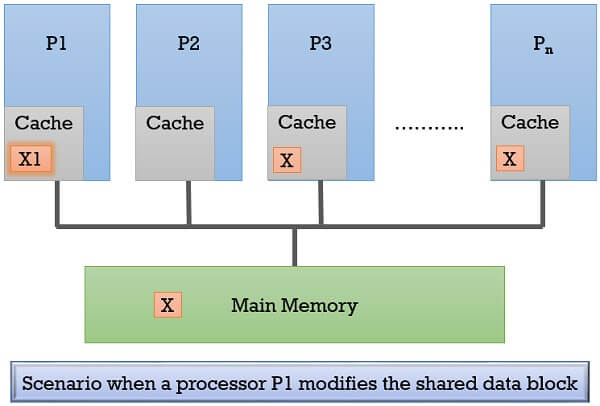

Suppose processor P1 modifies the copy of shared memory block X present in its cache. This results in data inconsistency: P1 now holds the modified copy of the shared memory block, i.e. X1, while the main memory and the other processors’ caches still hold the old copy X. This is the cache coherence problem.

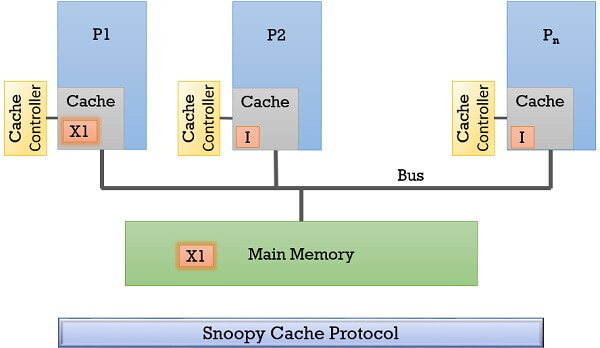

The figure above shows the cache coherence problem in a multiprocessing environment.

This cache coherence problem can be resolved using the protocols discussed below. Before getting into the protocols, we will discuss some terminology associated with the cache coherence problem.
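The inconsistency described above can be reproduced with a small toy model. This is only a sketch: the dictionaries standing in for main memory and the caches, and the values 10 and 99, are illustrative assumptions and not part of any real hardware interface.

```python
# Toy model: one shared main memory and private per-processor caches,
# with no coherence protocol at all (all names and values are illustrative).
main_memory = {"X": 10}

# P1, P3 and Pn each load block X into their own private cache.
caches = {name: {} for name in ("P1", "P3", "Pn")}
for cache in caches.values():
    cache["X"] = main_memory["X"]

# P1 modifies only its own cached copy (X becomes X1).
caches["P1"]["X"] = 99

# Every other copy, including the one in main memory, still holds the old value.
stale = [name for name, cache in caches.items()
         if cache["X"] != caches["P1"]["X"]]

print("P1 sees:", caches["P1"]["X"])
print("main memory holds:", main_memory["X"])
print("caches with a stale copy:", stale)
```

Nothing in this model propagates P1's write, which is exactly the gap the protocols below are meant to close.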

States of a Memory Block in Cache Memory

Cache memory is subdivided into a number of blocks. Whenever a processor requires a data block, it first looks for it in its own cache memory. If the block is not there, the data is retrieved from the main memory and a copy of it is placed in a cache block.

To maintain cache coherence, the cache controller keeps some information that lets it keep the caches of the other processors in the system synchronized while a processor is modifying its copy of data that is also shared by other processors. Specifically, the cache controller maintains a state for every cache block, and this state is what the coherence protocol acts on.

Let us discuss these states one by one:

- Modified (M): The data block in the cache has been modified, and the processor that modified it is the owner of that data block. No other cache in the system holds a copy of this data block, and the main memory copy does not contain the modified value. If the processor wants to modify the block again, it does not need to broadcast a request over the bus again.

- Exclusive (E): When the processor wants to modify a data block in its cache, it broadcasts a request to invalidate the copies of the same data block in other caches. The data block is then held only by that processor and by the main memory, and the processor is the exclusive owner of the data block.

- Shared (S): A data block in the main memory is shared by several processors in the system, and all of them hold a valid copy of the data block in their caches.

- Invalid (I): The cache holds a data block that does not contain valid data. If the processor wants to read or write/modify this data block, it has to send a request to the owner of that data block.
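The four states can be written down as a small enumeration together with the two questions a cache controller typically asks about a block. This is a minimal sketch; the helper names `can_read_locally` and `can_write_without_bus_request` are hypothetical, and treating E as writable without a further bus request follows the usual MESI convention rather than anything stated explicitly above.

```python
from enum import Enum


class State(Enum):
    MODIFIED = "M"   # only copy, differs from main memory
    EXCLUSIVE = "E"  # only cached copy, owned by this processor
    SHARED = "S"     # valid copy, other caches may hold it too
    INVALID = "I"    # no valid data in this cache block


def can_read_locally(state):
    # Any state except Invalid holds usable data.
    return state is not State.INVALID


def can_write_without_bus_request(state):
    # The owner (M or E) has no other cached copies to invalidate.
    return state in (State.MODIFIED, State.EXCLUSIVE)


for state in State:
    print(state.value,
          can_read_locally(state),
          can_write_without_bus_request(state))
```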

Cache Coherence Protocols

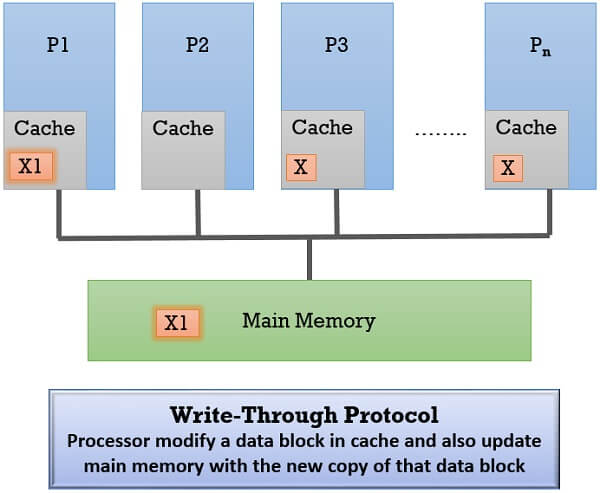

1. Write-Through Protocol

In the write-through protocol, when a processor modifies a data block in its cache, it immediately updates the main memory with the new copy of the same data block. So, the main memory here always has consistent data.

The write-through protocol has two versions:

- Updating Values in Other Caches

- Invalidating Values in Other Caches

Updating Values

Let us understand the first version where the inconsistent copies of shared data are updated in other caches.

- Whenever a processor modifies a shared data block in its cache, it immediately updates the same data block in the main memory.

- Other processors that have the same data block in their caches now hold inconsistent data. So the processor that modified the shared data block broadcasts the modified data to all the other processors in the system.

- When the other processors in the system receive the broadcast data, they check whether the same data block is present in their cache. If it is, the content of that data block is updated as specified in the broadcast; otherwise the broadcast data is discarded.
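The three steps above can be sketched as follows. The `Cache` class and the `write_through_update` function are hypothetical names chosen for this illustration, and the bus broadcast is modelled as a plain loop over the other caches.

```python
class Cache:
    """A private cache: maps block address -> cached value."""

    def __init__(self, name):
        self.name = name
        self.blocks = {}

    def on_update_broadcast(self, addr, value):
        # Apply the broadcast only if this cache holds the block;
        # otherwise the broadcast data is simply discarded.
        if addr in self.blocks:
            self.blocks[addr] = value


def write_through_update(writer, addr, value, memory, caches):
    writer.blocks[addr] = value      # 1. modify the local copy
    memory[addr] = value             # 2. write through to main memory
    for cache in caches:             # 3. broadcast the new data
        if cache is not writer:
            cache.on_update_broadcast(addr, value)


memory = {"X": 1}
p1, p2, p3 = Cache("P1"), Cache("P2"), Cache("P3")
p1.blocks["X"] = memory["X"]
p2.blocks["X"] = memory["X"]         # P3 never loaded X

write_through_update(p1, "X", 5, memory, [p1, p2, p3])
print(memory, p2.blocks, p3.blocks)
```

P2 picks up the new value because it already held X, while P3 ignores the broadcast and stays empty.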

Invalidating Values

Now, let us see the second version, where the inconsistent copies in other processors' caches are invalidated.

- Whenever a processor modifies a data block present in its cache memory, it immediately updates the same data block in the main memory.

- The processor modifying the data block broadcasts a request to the other processors in the system to invalidate the copies of the same data block in their caches.

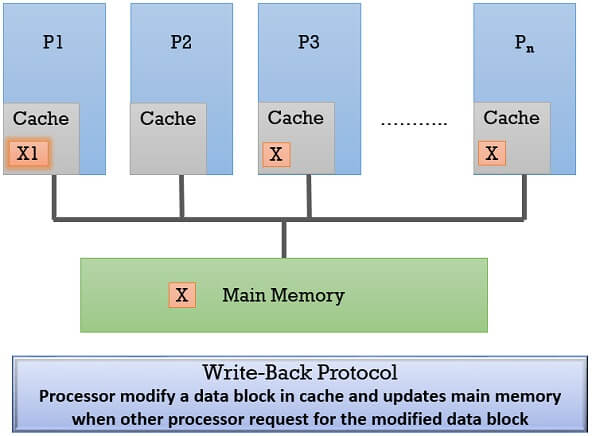
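A minimal sketch of the invalidating version, under the assumption that an invalidated block is simply dropped from the cache and re-fetched from main memory on the next read. The function names `write_through_invalidate` and `read` are hypothetical.

```python
def write_through_invalidate(writer, addr, value, memory, caches):
    caches[writer][addr] = value     # modify the local copy
    memory[addr] = value             # write through to main memory
    for name, blocks in caches.items():
        if name != writer:
            blocks.pop(addr, None)   # invalidate any other copy


def read(name, addr, memory, caches):
    # A miss (including an invalidated block) is served by main memory,
    # which is always up to date under write-through.
    if addr not in caches[name]:
        caches[name][addr] = memory[addr]
    return caches[name][addr]


memory = {"X": 1}
caches = {"P1": {}, "P2": {}}
read("P1", "X", memory, caches)
read("P2", "X", memory, caches)

write_through_invalidate("P1", "X", 7, memory, caches)
invalidated = "X" not in caches["P2"]
value_after_reload = read("P2", "X", memory, caches)
print(invalidated, value_after_reload)
```

Compared with the updating version, only a small invalidation message crosses the bus on each write; the data itself moves again only if another processor actually reads the block.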

2. Write-Back Protocol

This protocol permits the processor to modify a data block only if it acquires ownership.

Steps to Acquire Ownership

- Initially, the memory is the owner of all the data blocks, and it retains that ownership when a processor reads a data block and places a copy in its cache.

- When a processor wants to modify a data block in its cache, it has to confirm that it is the exclusive owner of that data block.

- For this, it first has to invalidate the copies of this data block in the other caches by broadcasting an invalidation request to all processors.

- Once it has become the exclusive owner, it can modify the data block.

- If any processor wants to read this modified data block it has to send the request to the current owner processor of that data block.

- The owner forwards the data to the requesting processor and to the main memory.

- The main memory updates its copy of the data block with the modified content and reacquires ownership of the data block.

From then on, any processor that requires this data block is serviced by the main memory.

Modify Data

If another processor in the system wishes to modify/write a data block that has already been modified, it sends a request to the current owner. The current owner sends the data and control over the block to the requesting processor.

Now, the requesting processor is the owner. It modifies the data block and also services other processors’ requests for the data block. Here the modified data block is not written to the main memory, since ownership passes directly between processors and only the owner is authorized to modify the data block.
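The ownership rules of the write-back protocol can be sketched as a toy simulation. The class `WriteBackSystem` and its methods are hypothetical; the owner of each block is tracked as either the string "memory" or a processor name, and bus messages are modelled as direct dictionary operations.

```python
class WriteBackSystem:
    def __init__(self, memory, processor_names):
        self.memory = dict(memory)
        self.caches = {name: {} for name in processor_names}
        # Initially the memory owns every data block.
        self.owner = {addr: "memory" for addr in memory}

    def read(self, proc, addr):
        if addr in self.caches[proc]:
            return self.caches[proc][addr]       # valid local copy
        owner = self.owner[addr]
        if owner != "memory":
            # The owning processor forwards the data to the requester and
            # to main memory, which then reacquires ownership.
            self.memory[addr] = self.caches[owner][addr]
            self.owner[addr] = "memory"
        self.caches[proc][addr] = self.memory[addr]
        return self.caches[proc][addr]

    def write(self, proc, addr, value):
        owner = self.owner[addr]
        if owner != proc:
            if owner != "memory":
                # The current owner hands data and control to the requester;
                # main memory is not updated at this point.
                self.caches[proc][addr] = self.caches[owner][addr]
            # Invalidate every other cached copy, then take ownership.
            for name, blocks in self.caches.items():
                if name != proc:
                    blocks.pop(addr, None)
            self.owner[addr] = proc
        self.caches[proc][addr] = value


system = WriteBackSystem({"X": 1}, ["P1", "P2", "P3"])
system.read("P1", "X")
system.read("P2", "X")

system.write("P1", "X", 5)          # P1 becomes exclusive owner
memory_after_p1_write = system.memory["X"]

system.write("P2", "X", 6)          # ownership moves from P1 to P2
owner_after_p2_write = system.owner["X"]

value_read_by_p3 = system.read("P3", "X")   # owner forwards, memory updated
print(memory_after_p1_write, owner_after_p2_write,
      value_read_by_p3, system.memory["X"], system.owner["X"])
```

Main memory stays stale through both writes and only catches up when P3's read forces the owner to forward the block.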

3. Snoopy Protocol

In this multiprocessor environment, all the processors are connected to the memory modules via a single bus. The transactions between the processors and the memory modules, i.e. read, write and invalidate requests for a data block, take place over this bus.

If every processor’s cache has its own cache controller, that controller can snoop (monitor) all the transactions on the bus and perform the appropriate action. So, we can say that the Snoopy protocol is a hardware solution to the cache coherence problem.

It is used in small multiprocessor environments, because large shared-memory multiprocessors are connected via an interconnection network rather than a single shared bus.

Consider a scenario from the write-back protocol in which a processor has just modified a data block in its cache and is the current owner of the block.

Now suppose another processor, P1, wishes to modify the same data block. P1 broadcasts an invalidation request on the bus, becomes the owner of that data block and modifies it. The other processors that hold a copy of the same data block snoop the bus and invalidate their copies (I). Main memory is updated according to the write-back protocol.

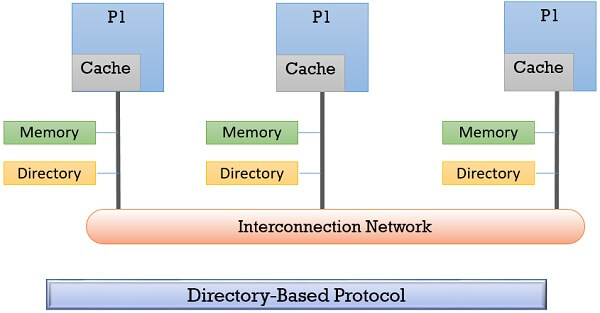
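The snooping behaviour described above can be sketched with a shared bus object that every cache controller is attached to. The `Bus` and `CacheController` classes are hypothetical, only the invalidate transaction is modelled, and data transfer between caches is omitted, which a real controller would also have to handle.

```python
class Bus:
    def __init__(self):
        self.controllers = []
        self.log = []                 # every transaction placed on the bus

    def broadcast(self, sender, kind, addr):
        self.log.append((sender.name, kind, addr))
        for controller in self.controllers:
            if controller is not sender:
                controller.snoop(kind, addr)


class CacheController:
    def __init__(self, name, bus):
        self.name = name
        self.bus = bus
        self.lines = {}               # addr -> (state, value)
        bus.controllers.append(self)

    def load(self, addr, value):
        self.lines[addr] = ("S", value)

    def write(self, addr, value):
        state, _ = self.lines.get(addr, ("I", None))
        if state != "M":
            # Not yet the owner: ask everyone else to invalidate.
            self.bus.broadcast(self, "invalidate", addr)
        self.lines[addr] = ("M", value)

    def snoop(self, kind, addr):
        # React only to transactions for blocks this cache actually holds.
        if kind == "invalidate" and addr in self.lines:
            self.lines[addr] = ("I", None)


bus = Bus()
p1, p2, p3 = (CacheController(n, bus) for n in ("P1", "P2", "P3"))
p1.load("X", 1)
p2.load("X", 1)

p2.write("X", 2)   # P2 becomes owner, P1's copy is invalidated
p2.write("X", 3)   # already owner: no new bus transaction
p1.write("X", 4)   # P1 takes ownership, P2's copy is invalidated
print(p1.lines, p2.lines, bus.log)
```

Only two transactions reach the bus for three writes, because the second write by P2 finds the block already in the M state.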

4. Directory-Based Cache Coherence Protocol

The directory-based cache coherence protocol is a hardware solution to the cache coherence problem. It is implemented in large multiprocessor systems where the shared memory and the processors are connected using an interconnection network.

A directory is implemented in each memory module of the multiprocessor system. These directories keep a record of the state of each data block, i.e. whether a data block in the cache of a processor is invalid, is being modified, or is in the shared state. Due to their cost and complexity, directory-based cache coherence protocols are implemented only in large multiprocessor systems.
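A directory can be pictured as a per-block record of the block's state and of which processors hold it. The sketch below is an assumption-laden illustration: the `Directory` class, its entry layout and the single-letter state codes are hypothetical, and fetching modified data back from an owner is left out.

```python
class Directory:
    """Per-memory-module record of the state and holders of each block."""

    def __init__(self):
        self.entries = {}   # addr -> {"state": "I" | "S" | "M", "sharers": set}

    def _entry(self, addr):
        return self.entries.setdefault(addr, {"state": "I", "sharers": set()})

    def read(self, proc, addr):
        entry = self._entry(addr)
        # A real system would first retrieve the data from the owner
        # if the block were in the M state; that step is omitted here.
        entry["state"] = "S"
        entry["sharers"].add(proc)

    def write(self, proc, addr):
        entry = self._entry(addr)
        # Only the recorded holders need an invalidation message,
        # so no broadcast to every processor is required.
        to_invalidate = entry["sharers"] - {proc}
        entry["state"] = "M"
        entry["sharers"] = {proc}
        return to_invalidate


directory = Directory()
directory.read("P1", "X")
directory.read("P2", "X")
invalidations = directory.write("P3", "X")
print(sorted(invalidations), directory.entries["X"])
```

Because the directory knows exactly which caches hold a block, invalidations can be sent point to point over the interconnection network instead of being broadcast on a bus.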

Key Takeaways

- Cache coherence ensures data consistency among all the copies of a memory block in the system (in the cache memory of the various processors and in the main memory).

- When a processor modifies a data block in its cache, the copies of the same data block in other caches and in the memory are not updated automatically. So, the other caches would hold the old copy of the same data block. This data inconsistency is the cache coherence problem.

- Protocols such as the write-through protocol, write-back protocol, snoopy protocol, and directory-based protocol maintain cache coherence in the system.

- Along with the protocols mentioned above, there are several other approaches to maintaining cache coherence in the system.

So, cache coherence is one of the important properties a multiprocessor system has to maintain. Ignoring it is not an option, as it would lead to incorrect results.
