Data cleaning is the technique used to eliminate the inconsistencies and irregularities in the data. Redundant or irrelevant data only increase the amount of storage. So, it is very important to clean the data as the inaccurate data not only confuses the data mining programs but also degrades the quality of data.

In this section, we will discuss data mining in brief along with that we will also discuss the process of data cleaning. Further, we will discuss the tools used for data cleaning.

Content: Data Cleaning in Data Mining

- What is Data Cleaning?

- Why Data Cleaning is Important?

- Steps in Data Cleaning

- Data Cleaning Tools

- Key Takeaways

What is Data Cleaning?

Data cleaning is a process that is performed to enhance the quality of data. Well, it includes normalizing the data, removing the errors, soothing the noisy data, treat the missing data, spot the unnecessary observation and fixing the errors.

Generally, the data obtained from the real-world sources are incorrect, inconsistent, has errors and is often incomplete. So, such low-level data needs to be cleaned before data mining.

Why Data Cleansing is Important?

Data cleaning is important for both an individual as well as an organization. As the company grows it accumulates a lot of data. Searching for a particular file you have to wade through several files and you may not even get the file as a disorganized data require a lot of effort and time.

Clean and organized data help the executives and managers of the organization in making decisions that can boost the productivity of the organization.

Clean data also saves time and money. As good decisions would let a successful marketing campaign which helps in customer acquisition which in turn would result in increased sales and revenue. Knowing the importance let us move towards the steps required in data cleaning.

Steps in Data Cleaning

The data collected from real-world have several kinds of inconsistencies, each of which could be treated separately. But before treating the inconsistent data it should be detected that from where and why the data has become inconsistent.

1. Identify Inconsistent Data

The inconsistency in data may be due to various reasons like the data entry form is designed with several optional fields which let the candidates fill incomplete information. The candidates may have made a mistake while entering the data. Some of the data may be out of date like people change their address, phone number etc. These might be the reason for the inconsistent data.

2. Handle Missing Values

If there is a tuple whose several attribute values are missing then that tuple is ignored. But, by ignoring such tuples also let us ignore remaining attributes that have valid values which may be useful in some way.

Further, the missing attribute values can be filled manually but this idea doesn’t work if you have to do it for a large data set that has many missing attribute values, it will be a costly and time-consuming process.

Well, a constant ‘unknown’ can be used to replace the missing values. But, the data mining program can mistakenly interpret this as an interesting concept as all the missing values now have the common value ‘unknown’. Though it is a simple but not a promising method.

To fill the missing values of the attribute you can use the concept of ‘measure of central tendency’. This concept says use means for symmetric data distribution and for skewed data distribution use median. Well, most of the methods use the existing data to guess the missing values.

3. Smoothing Noise in Data

Before getting into the method to remove noise in the data let us understand what do we mean by the noisy data?

Noisy data contains meaningless information. The term noisy data is often used for the term corrupt data. Well, noisy data cannot be read by the data mining program to yield useful statistics. Noisy data increase the amount of data in the data warehouse, which should be effectively removed to facilitate data mining.

Let us discuss the techniques to remove noisy data.

- Binning

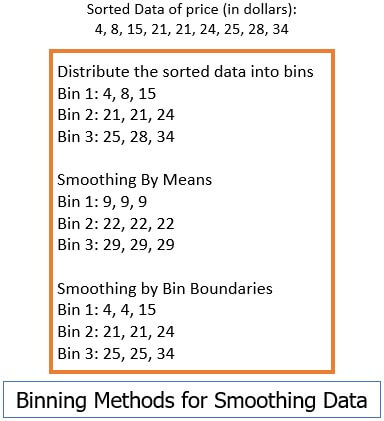

This method is used to polish the sorted data values, considering their neighbouring values. The sorted data values are put into the number of buckets and considering the neighbouring values in each bin, the local smoothing is performed.

In the image below you can see some binning techniques performed on the sorted data.

In the binning technique using means, all values in bins are substitutes by the mean value. In the binning technique using boundary values, all the values in the bin are substituted by closest boundary values.

In the binning technique using means, all values in bins are substitutes by the mean value. In the binning technique using boundary values, all the values in the bin are substituted by closest boundary values. - Regression

Regression is used to smooth noisy data. Regression matches the data values to a function such as linear regression which identifies the relationship between two variables so that, one attribute helps in identifying the value of another attribute. - Outlier Analysis

The outlier values could be analyzed with the method of clustering. The similar valued are grouped to a cluster and the values that are loosely related, lies outside the cluster and this is how outlier can be detected.

Data Cleaning Tools

1. Data Scrubbing Tool

The data scrubbing tool utilize the domain knowledge to identify the errors. Using this domain knowledge, it also rectifies the data. Parsing and fuzzy matching are the techniques adopted by this tool while cleaning the data.

2. Data Auditing Tool

The data auditing tool identifies the rule and relationship between the data and then analyze the data to discover, which data is violating these rules.

3. Data Migration Tool

The data migration tool transfers the data from one storage to another. This tool extracts the data from storage, transforms it and then load it to other storage.

Key Takeaways

- The data might be collected from multiple sources and there may be discrepancies or inconsistencies in the data.

- Data cleaning is done to improve the quality of data and support the data-mining program.

- Data cleaning is important because the clean data eases data mining and helps in making a successful strategic decision.

- Data cleaning involves tackling the missing data and smoothing noisy data.

- Noisy data can be smoothen using the binning technique, regression and analyzing the outlier data.

- Data cleaning can also be performed using data cleaning tools.

So, this is how the data in the data warehouse is cleaned before the data mining process. It is important to clean data as the data collected by the enterprise over the years may have inconsistencies which should be cleared to get an accurate idea of clean data which help them in making the strategic decision.

Pramila says

This information has made me understand each and every words in a easy and simple manner.

For a beginner it is very good