An interrupt in computer architecture is a signal that requests the processor to suspend its current execution and service the interrupt. To service the interrupt, the processor executes the corresponding interrupt service routine (ISR). After the interrupt service routine completes, the processor resumes execution of the suspended program. Interrupts are of two types: hardware interrupts and software interrupts.

Content: Interrupts in Computer Architecture

- Types of Interrupts

- Interrupt Cycle

- Interrupt Latency

- Enabling and Disabling Interrupts

- Handling Multiple Devices

- Priority Interrupts

- Controlling I/O Device Behaviour

Types of Interrupts in Computer Architecture

Interrupts come in various kinds, but they are broadly classified into hardware interrupts and software interrupts.

1. Hardware Interrupts

If the processor receives an interrupt request from an external I/O device, it is termed a hardware interrupt. Hardware interrupts are further divided into maskable and non-maskable interrupts.

- Maskable Interrupt: A hardware interrupt that can be ignored or delayed for some time, for example while the processor is executing a program with higher priority, is termed a maskable interrupt.

- Non-Maskable Interrupt: A hardware interrupt that can neither be ignored nor delayed and must be serviced by the processor immediately is termed a non-maskable interrupt.
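The difference between the two kinds can be sketched in a few lines of Python. This is only an illustrative model: the `HardwareInterrupt` class, the `serviced_now` function, and the device names are hypothetical and do not correspond to any particular processor.

```python
from dataclasses import dataclass


@dataclass
class HardwareInterrupt:
    source: str      # hypothetical device name
    maskable: bool   # False models a non-maskable interrupt


def serviced_now(irq: HardwareInterrupt, masked: bool) -> bool:
    """Return True if the processor services the request immediately."""
    # A maskable request waits while masking is in effect;
    # a non-maskable request is serviced regardless of the mask.
    return not (irq.maskable and masked)


keyboard = HardwareInterrupt("keyboard", maskable=True)
power_fail = HardwareInterrupt("power-fail", maskable=False)

print(serviced_now(keyboard, masked=True))    # False
print(serviced_now(power_fail, masked=True))  # True
```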

2. Software Interrupts

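Software interrupts are interrupts raised by the executing program itself, either when a particular condition is met during execution (for example, an exceptional condition such as division by zero) or when a system call is made.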

Interrupt Cycle

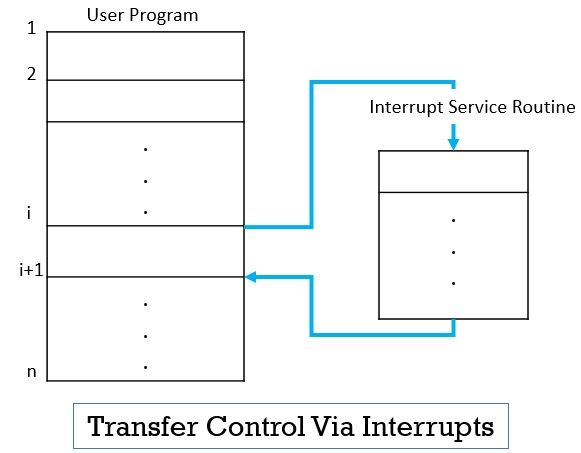

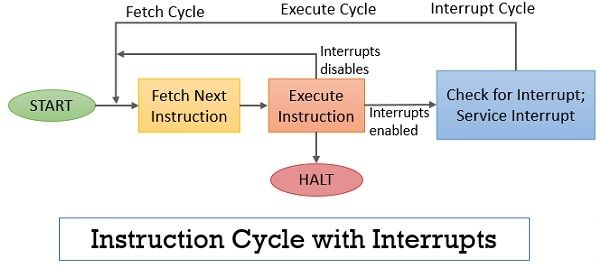

A normal instruction cycle consists of an instruction fetch followed by its execution. To accommodate interrupts that occur during normal instruction processing, an interrupt cycle is added to the normal instruction cycle, as shown in the figures above.

After executing the current instruction, the processor checks the interrupt signal to see whether any interrupt is pending. If no interrupt is pending, the processor proceeds to fetch the next instruction in sequence.

If the processor finds a pending interrupt, it suspends the current program by saving the address of the next instruction to be executed, and then loads the program counter with the starting address of the interrupt service routine.

After the interrupt is serviced completely the processor resumes the execution of the program it has suspended.
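The cycle described above can be sketched as a small simulation. It is a simplified model under stated assumptions: instructions are just labels, the hypothetical `interrupt_at` parameter marks the instruction during which a device raises its request, and the whole ISR runs before the program resumes.

```python
def run(program, isr, interrupt_at):
    """Simulate fetch/execute with an interrupt check after each instruction.

    program, isr: lists of instruction labels (hypothetical).
    interrupt_at: index of the instruction during which the request arrives.
    """
    trace = []
    pc = 0
    pending = False
    while pc < len(program):
        trace.append(program[pc])   # fetch and execute the current instruction
        if pc == interrupt_at:
            pending = True          # a device raises a request meanwhile
        pc += 1                     # pc now addresses the next instruction
        if pending:                 # interrupt cycle: check for a pending request
            saved_pc = pc           # save the address of the next instruction
            trace.extend(isr)       # PC is loaded with the ISR start; ISR runs
            pc = saved_pc           # restore the PC and resume the program
            pending = False
    return trace


print(run(["i0", "i1", "i2"], ["isr0", "isr1"], interrupt_at=1))
# ['i0', 'i1', 'isr0', 'isr1', 'i2']
```

Note that the request arriving during `i1` is only acted on after `i1` has finished, which matches the check at the end of the instruction cycle.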

What is Interrupt Latency?

As we know, to service an interrupt the processor suspends the current program and saves its state so that execution can later resume correctly. Modern processors save only the minimum information needed to resume the suspended program. Still, saving and restoring this information involves transfers between registers and memory, which adds to the execution time of the program.

Memory transfers also occur when the program counter is loaded with the starting address of the interrupt service routine. Together, these transfers cause a delay between the time the interrupt request is received and the time the processor starts executing the interrupt service routine. This delay is termed interrupt latency.
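As a rough back-of-the-envelope illustration, latency can be estimated by adding up the contributing cycle counts. The breakdown and the numbers below are assumptions made for this sketch, not figures for any real processor; the `remaining_cycles` term models the wait for the current instruction to finish before the interrupt is checked.

```python
def interrupt_latency(remaining_cycles: int, words_saved: int,
                      cycles_per_transfer: int, vector_fetch_cycles: int) -> int:
    """Estimate cycles from the interrupt request to the first ISR instruction."""
    # Cost of saving the minimum state (e.g. PC and status register) to memory.
    save_cycles = words_saved * cycles_per_transfer
    # Wait for the current instruction, save state, then load the ISR address.
    return remaining_cycles + save_cycles + vector_fetch_cycles


# Hypothetical numbers: 2 cycles left in the current instruction,
# 2 words saved at 3 cycles each, 3 cycles to load the ISR address.
print(interrupt_latency(remaining_cycles=2, words_saved=2,
                        cycles_per_transfer=3, vector_fetch_cycles=3))  # 11
```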

Enabling and Disabling Interrupts in Computer Architecture

Modern computers provide facilities to enable and disable interrupts, because a programmer must have control over the events that occur during the execution of a program.

For example, consider a sequence of instructions that must be executed without interruption, because an interrupt service routine could change the data that the sequence uses. The programmer therefore needs a way to disable interrupts before the sequence and enable them again afterwards.

Interrupts can be enabled and disabled at either end: at the processor or at the I/O device. When they are controlled at the processor end, the processor accepts or rejects incoming interrupt requests. When they are controlled at the device end, the device is either allowed to raise an interrupt request or prevented from raising one.

To enable or disable interrupts at the processor end, one bit of its status register, IE (Interrupt Enable), is used. When the IE flag is set to 1, the processor accepts interrupt requests. When the IE flag is set to 0, the processor ignores them.

To enable or disable interrupts at the I/O device end, a control register in the device's interface is used. One bit of this control register determines whether the device may raise interrupt requests.
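The two enable bits can be modelled as follows. This is a minimal sketch: the bit position `IE_BIT`, the class names, and the method names are assumptions chosen for illustration, and real status and control registers differ from one architecture to another.

```python
IE_BIT = 0x01  # assumed bit position, for illustration only


class Device:
    def __init__(self) -> None:
        self.control = 0  # control register in the device interface

    def set_interrupts(self, enabled: bool) -> None:
        if enabled:
            self.control |= IE_BIT
        else:
            self.control &= ~IE_BIT

    def raises_request(self) -> bool:
        # The device may raise a request only if its enable bit is 1.
        return bool(self.control & IE_BIT)


class Processor:
    def __init__(self) -> None:
        self.status = 0  # status register holding the IE flag

    def set_interrupts(self, enabled: bool) -> None:
        if enabled:
            self.status |= IE_BIT
        else:
            self.status &= ~IE_BIT

    def accepts(self, device: Device) -> bool:
        # A request is serviced only if the device raised it AND IE is 1.
        return device.raises_request() and bool(self.status & IE_BIT)


cpu, disk = Processor(), Device()
disk.set_interrupts(True)
print(cpu.accepts(disk))   # False: processor IE flag is still 0
cpu.set_interrupts(True)
print(cpu.accepts(disk))   # True: both ends are enabled
```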

Handling Multiple Devices

Consider a processor connected to multiple devices, each of which is capable of generating interrupts. Because the devices are functionally independent of one another, there is no fixed order in which they raise interrupts.

For instance, device X may interrupt the processor while it is servicing an interrupt caused by device Y, or multiple devices may request interrupts simultaneously. These situations raise several questions:

- How will the processor identify which device has requested the interrupt?

- If different devices request different types of interrupt, each needing its own service routine, how does the processor obtain the starting address of the right interrupt service routine?

- Can a device interrupt the processor while it is servicing the interrupt produced by another device?

- How should the processor respond if multiple devices request interrupts simultaneously?

How these situations are handled varies from computer to computer. Start with the first question: if multiple devices are connected to the processor and each can raise an interrupt, how does the processor determine which device has requested one?

One solution is that whenever a device requests an interrupt, it sets the interrupt request (IRQ) bit in its status register to 1. The processor then checks the IRQ bit of each device in turn, and the device whose IRQ bit is 1 is the one that raised the interrupt.

But this method is time-consuming, since the processor spends time checking the IRQ bit of every connected device. This wasted time can be reduced by using vectored interrupts.
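The polling approach can be sketched as a simple loop over the devices' status registers. The bit position `IRQ_BIT` and the function name `poll` are assumptions for this illustration.

```python
from typing import Optional, Sequence

IRQ_BIT = 0x01  # assumed position of the IRQ bit in each status register


def poll(status_registers: Sequence[int]) -> Optional[int]:
    """Return the index of the first device whose IRQ bit is 1, else None."""
    for device_id, status in enumerate(status_registers):
        if status & IRQ_BIT:
            return device_id
    return None


print(poll([0, 0, 1, 0]))  # 2
```

In the worst case the loop reads every status register before it finds the requesting device, which is the overhead that vectored interrupts avoid.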

Vectored Interrupt

Devices that raise vectored interrupts identify themselves directly to the processor. So instead of spending time working out which device has requested an interrupt, the processor immediately starts executing the corresponding interrupt service routine.

To identify itself directly to the processor, a device either uses its own dedicated interrupt request signal or sends a special code to the processor that tells it which device has requested the interrupt.

Usually, a permanent area in memory is allotted to hold the starting address of each interrupt service routine. These addresses are termed interrupt vectors, and together they constitute the interrupt vector table. Now how does it work?

The device requesting an interrupt sends its specific interrupt request signal or special code to the processor. This information acts as an index into the interrupt vector table, and the corresponding entry (the starting address of the interrupt service routine that services this device) is loaded into the program counter.
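A vector table lookup can be sketched as an indexed read. The addresses in `VECTOR_TABLE`, the function name, and the use of the device code as a direct list index are all assumptions made for illustration.

```python
from typing import Sequence, Tuple


def take_vectored_interrupt(pc: int, vector_table: Sequence[int],
                            code: int) -> Tuple[int, int]:
    """Return (new_pc, saved_pc) for a vectored interrupt with the given code."""
    saved_pc = pc                 # return address of the suspended program
    new_pc = vector_table[code]   # the code indexes the table directly
    return new_pc, saved_pc


# Hypothetical table: entry i holds the start address of the ISR for code i.
VECTOR_TABLE = [0x1000, 0x1400, 0x1800]

new_pc, saved_pc = take_vectored_interrupt(0x0204, VECTOR_TABLE, code=1)
print(hex(new_pc), hex(saved_pc))  # 0x1400 0x204
```

No device is polled here: the code sent by the device selects the ISR address in a single lookup.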

Interrupt Nesting

While the processor is executing an interrupt service routine, interrupts are disabled so that the same device does not interrupt the processor again before its request has been serviced. A similar arrangement is used when multiple devices are connected to the processor, so that the servicing of one interrupt is not interrupted by an interrupt from another device.

What if multiple devices raise interrupts simultaneously? In that case, the interrupts are prioritized.

Priority Interrupts in Computer Architecture

The I/O devices are organized in a priority structure such that an interrupt raised by a high-priority device is accepted even while the processor is servicing an interrupt from a low-priority device.

A priority level is assigned to the processor, and it can be changed under program control. Whenever the processor starts executing a program, its priority level is set equal to the priority of that program. Thus, while executing the current program, the processor accepts interrupts only from devices whose priority is higher than its own.

When the processor is executing an interrupt service routine, its priority level is set to the priority of the device whose interrupt it is servicing. Thus the processor accepts interrupts only from devices with higher priority and ignores interrupts from devices with the same or lower priority. A few bits of the processor's status register are used to hold the processor's priority level.
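The priority scheme can be sketched as follows. The class, the device names, and the numeric priorities are hypothetical, and the model is simplified: a rejected request is just logged as ignored, whereas a real system would typically keep it pending until the processor's priority drops.

```python
class Processor:
    def __init__(self, priority: int = 0) -> None:
        self.priority = priority  # models the priority bits of the status register
        self.log = []

    def request(self, device: str, device_priority: int, isr=None) -> bool:
        """Accept the request only if the device outranks the processor."""
        if device_priority <= self.priority:
            self.log.append(f"ignore {device}")
            return False
        saved = self.priority
        self.priority = device_priority   # run the ISR at the device's priority
        self.log.append(f"enter {device}")
        if isr is not None:
            isr(self)                     # further requests may arrive in the ISR
        self.log.append(f"exit {device}")
        self.priority = saved             # restore the priority on return
        return True


def disk_isr(cpu: Processor) -> None:
    cpu.request("keyboard", 1)  # lower than the disk (2): ignored
    cpu.request("timer", 3)     # higher than the disk (2): nested


cpu = Processor()
cpu.request("disk", 2, disk_isr)
print(cpu.log)
# ['enter disk', 'ignore keyboard', 'enter timer', 'exit timer', 'exit disk']
```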

Controlling I/O Device Behaviour

The processor does not necessarily have to service interrupts from every device it is connected to. To keep devices from generating unwanted interrupts, a mechanism is built into each device's interface that lets the device raise an interrupt only when the processor is willing to service it. This can be implemented by providing an interrupt enable bit in the interface circuit of the device.

So this is all about the interrupts in computer architecture.
